I suspect that they recorded the compensated data, which is a bad idea. The MS5607 datasheet has details on performing the calculations, but if you do them on board and drop a bit somewhere, it is very hard to recover it afterward.

If they had the raw data, they could tell whether it was a sensor problem or a data-crunching problem.
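For anyone who wants to redo the math offline from logged raw counts, here is a minimal sketch of the first-order MS5607 compensation as I understand it from the datasheet. The function name and dictionary layout are my own, the second-order low-temperature correction is left out, and Python's floor division may differ by one count from a C implementation when the temperature difference is negative. The sample values are the datasheet's worked example as I recall it, so check them against your copy.

```python
def compensate_ms5607(c, d1, d2):
    """First-order MS5607 compensation from raw ADC counts.

    c  : dict of PROM calibration words "C1".."C6" (hypothetical layout)
    d1 : raw pressure reading
    d2 : raw temperature reading
    Returns (temperature in 0.01 degC, pressure in 0.01 mbar).
    """
    dt = d2 - c["C5"] * 2**8                         # difference from reference temperature
    temp = 2000 + dt * c["C6"] // 2**23              # actual temperature
    off = c["C2"] * 2**17 + (c["C4"] * dt) // 2**6   # offset at actual temperature
    sens = c["C1"] * 2**16 + (c["C3"] * dt) // 2**7  # sensitivity at actual temperature
    pressure = (d1 * sens // 2**21 - off) // 2**15   # temperature-compensated pressure
    return temp, pressure


# Datasheet-style example values (verify against the datasheet before relying on them).
cal = {"C1": 46372, "C2": 43981, "C3": 29059,
       "C4": 27842, "C5": 31553, "C6": 28165}
temp, pressure = compensate_ms5607(cal, d1=6465444, d2=8077636)
print(temp / 100.0, "degC", pressure / 100.0, "mbar")
```

If the flight computer logs D1, D2, and the six calibration words, a check like this can be rerun after the flight to separate a sensor fault from an arithmetic fault.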

Verifying that the math is done correctly is straightforward, both by comparing against the datasheet examples and by verifying that the computed ambient pressure stays constant as you heat and cool the unit through different operating ranges. Both have been done with the Raven.

Re-reading the report, I see that they didn't use the Raven's baro data recording. I'm not sure why, since it's the same sensor they used, but recorded twice as often. I also see a statement that isn't quite correct: "The Raven interpolates its readings to the timescale of its axial acceleration sensor, which is both the most frequently recorded source and the only data from the Raven used in the final results." The graphs of the baro data in the FIP (Featherweight Interface Program) look interpolated, but that's just the display. The data samples are recorded on board at their own sampling rate. If you select the parameters you want to export to a spreadsheet and right-click in the parameter selection box, you'll see an option for copying the data to paste into Excel. When you do that, you'll see that each sample from every measurement gets its own timestamp at the time it was recorded.

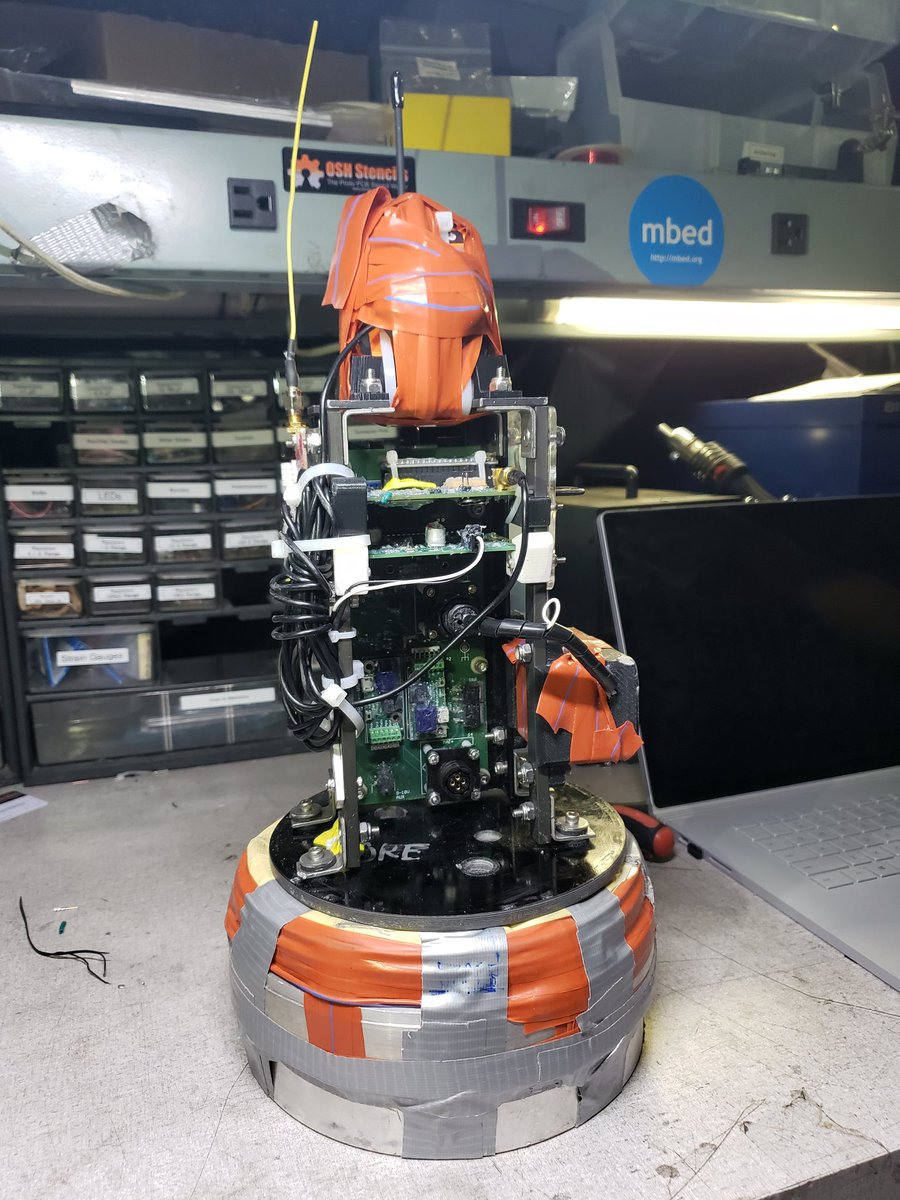
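To make the distinction concrete, here is a small sketch of what interpolating one channel onto another channel's timestamps looks like when done after the fact on exported data. The function names and sample numbers are made up for illustration, and this is not a description of what the FIP does internally. The recorded samples keep their own timestamps; interpolation only produces a derived copy.

```python
from bisect import bisect_right


def interpolate_at(times, values, t):
    """Linearly interpolate (times, values) at time t, clamping outside the range."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    i = bisect_right(times, t)          # index of the first sample later than t
    t0, t1 = times[i - 1], times[i]
    v0, v1 = values[i - 1], values[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


def resample(times, values, new_times):
    """Return values interpolated at each time in new_times."""
    return [interpolate_at(times, values, t) for t in new_times]


# Hypothetical data: baro recorded at 20 Hz, accel timestamps at 40 Hz.
baro_t = [0.0, 0.05, 0.10]
baro_p = [1000.0, 990.0, 980.0]
accel_t = [0.0, 0.025, 0.05, 0.075, 0.10]

baro_on_accel_t = resample(baro_t, baro_p, accel_t)
print(baro_on_accel_t)
```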

The Ravens were configured as timers (perhaps the Raven3 performance scared them?), but they were a backup to their custom "HAMSTER" avionics package. GPS apogee detection appears to have been the primary mode, with the timer backup doing the work in this case.

They flew Raven4s, rather than Raven3s. As far as I know, the Raven4 performed the only apogee deployment. They used one of the deployment channels as a timer, which is what I have been recommending for flights over 120 kft.

The Raven4s have much-improved accelerometer accuracy compared to all previous Raven versions. On this flight, if they had used the Raven4's accel-based apogee estimate rather than a timer, it would have done the apogee deployment in the exo-atmospheric part of the flight. That's not saying a lot though, since the middle 240 seconds of this flight were over 100 kft.

The rocket was reading a small negative fraction of a G before the deployment, which mostly went away after deployment. That would be consistent with centrifugal acceleration from a tumble. The small negative G reading caused the on-board estimate of apogee to be about 20 seconds earlier than actual apogee, which again wouldn't have made any difference, since the altitude at that time was over 300,000 feet, and the density at 280,000 feet (the highest altitude I have a reference for) is about 5 millionths of what it is at sea level. If it's true that an exo-atmospheric tumble caused those small negative-G readings, then the Raven4's accel-based apogee estimate was right on the money.

I did an accel calibration the night before I express-mailed them their units, using the standard accel cal process in the Featherweight Interface Program. Their units were stock except for high-altitude firmware, which allows for longer timer durations and higher altitude thresholds, and has more filtering on the baro sensing for the deployment logic.

So for people planning a flight over 100 kft with a Raven4, it looks like the on-board apogee estimate is now probably accurate enough for that task. I won't be really confident in that, though, until I see more data from flights between 30 kft and 150 kft where there is baro and GPS data that clearly shows the apogee time.
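To illustrate why a small negative accelerometer reading moves an integrated apogee estimate earlier, here is a deliberately simplified sketch. It assumes a purely vertical, unpowered coast with constant gravity and no drag, and it is not the Raven's actual algorithm. The function names, the burnout velocity, and the bias value are all hypothetical. An ideal accelerometer reads 0 G in free fall, so the integrator subtracts 1 G from the velocity estimate; a reading of `accel_bias_g` changes that rate to `(1 - accel_bias_g)` G.

```python
G0 = 9.80665  # standard gravity in m/s^2, treated as constant (a simplification)


def coast_time_to_apogee(v0, accel_bias_g=0.0):
    """Time in seconds for the integrated velocity estimate to reach zero.

    v0           : vertical velocity at the start of the coast, in m/s
    accel_bias_g : constant accelerometer reading during the coast, in G
                   (0 for an ideal sensor in free fall, must be below 1)
    """
    return v0 / (G0 * (1.0 - accel_bias_g))


def apogee_time_shift(v0, accel_bias_g):
    """Estimated apogee time minus the unbiased apogee time, in seconds."""
    return coast_time_to_apogee(v0, accel_bias_g) - coast_time_to_apogee(v0, 0.0)


# Hypothetical numbers, not taken from the flight discussed above.
shift = apogee_time_shift(v0=1200.0, accel_bias_g=-0.02)
print(f"apogee estimate shifts by {shift:.1f} s")
```

A negative result means the estimate fires early, which is the direction described above. The size of the shift grows with the length of the coast, so long exo-atmospheric flights are the most sensitive to small biases.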